My former editor and current friend Alan Rusbridger spoke to the House of Lords communications and digital committee last Tuesday (2 March) in his capacity as a member of Facebook’s Oversight Board.

The board has been the subject of furious debate since it was announced, and subject to a lot of analysis.

The best read I’ve found is Kate Klonick’s piece in the New Yorker, though I don’t agree with the characterisation of the board as a ‘Supreme Court’ given how limited its remit currently is and the fact that Mark Zuckerberg could unilaterally abolish it tomorrow. We may have the principle that a Parliament cannot bind its successors: Zuckerberg can change his mind twice before breakfast if he pleases.

https://www.newyorker.com/tech/annals-of-technology/inside-the-making-of-facebooks-supreme-court

One of the points that came out of Alan Rusbridger’s committee appearance was that the board is, according to The Guardian report, ‘trying to gain access to the social network’s curation algorithm to understand how it works’. Indeed, Alan is quoted as saying:

At some point we’re going to ask to see the algorithm, I feel sure, whatever that means. Whether we’ll understand when we see it is a different matter.

This is such an unhelpful thing to say that I was prompted to tweet:

The reification of the algorithm really has to stop. It’s not an altarpiece. Ask the people who designed it what their intentions were and whether it has met them. You don’t need a code review.

I’d like to expand on this point.

I believe that asking to ‘see the algorithm’ is unhelpful for two reasons. First, it perpetuates the idea that an algorithm is something solid, that you can take it out and examine it like a spark plug or a dilithium crystal, before plugging it back into the complex machine it somehow powers.

And second, it reinforces the idea that ‘the algorithm’ is somehow separate from the organisation and the individuals who have designed it, developed it, deployed it, and who maintain it and benefit from its operation.

In doing this, we are treating an abstraction as if it were a real thing, an example of the reification fallacy.

Of course, there are cases where you can see an algorithm in its entirety. Many of us know how to use an algorithm to solve quadratic equations (‘x equals minus b plus or minus the square root of b squared minus four a c, all over 2a’ was seared into my mind at school).
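Written out in symbols, for a quadratic $ax^2 + bx + c = 0$ with $a \neq 0$, that recitation is the familiar formula:

$$
x = \frac{-b \pm \sqrt{b^2 - 4ac}}{2a}
$$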

And here’s one of the first programming algorithms I was ever taught:

    /* Bubble sort code */
    #include <stdio.h>

    int main(void)
    {
      int array[100], n, c, d, swap;

      printf("Enter number of elements\n");
      scanf("%d", &n);  /* n is never checked against the array size */
      printf("Enter %d integers\n", n);
      for (c = 0; c < n; c++)
        scanf("%d", &array[c]);

      /* After each outer pass, the largest remaining value has moved to the end */
      for (c = 0; c < n - 1; c++) {
        for (d = 0; d < n - c - 1; d++) {
          if (array[d] > array[d+1]) { /* For decreasing order use '<' instead of '>' */
            swap = array[d];
            array[d] = array[d+1];
            array[d+1] = swap;
          }
        }
      }

      printf("Sorted list in ascending order:\n");
      for (c = 0; c < n; c++)
        printf("%d\n", array[c]);
      return 0;
    }

This is a bubble sort. Enter a list of up to 100 whole numbers and it will sort them for you. (Of course, tell it you’re going to enter 105 and this code will write past the end of the array at the 101st, and probably crash: there is no bounds checking.)
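For the curious, here is a minimal sketch of what the missing check might look like, assuming we simply refuse any count that does not fit in the array. The names `MAX_ELEMENTS`, `count_fits` and `bubble_sort` are my own inventions for illustration and are not part of the listing above; the sorting loop itself is unchanged, just pulled out into a function.

```c
#define MAX_ELEMENTS 100 /* assumed capacity, matching array[100] in the listing */

/* Hypothetical helper: returns 1 if n values fit in the array, 0 otherwise. */
int count_fits(int n)
{
    return n >= 0 && n <= MAX_ELEMENTS;
}

/* The same bubble sort as in the listing, sorting n ints into ascending order. */
void bubble_sort(int array[], int n)
{
    int c, d, swap;

    for (c = 0; c < n - 1; c++) {
        for (d = 0; d < n - c - 1; d++) {
            if (array[d] > array[d + 1]) {
                swap = array[d];
                array[d] = array[d + 1];
                array[d + 1] = swap;
            }
        }
    }
}
```

In the original `main`, the idea would be to call something like `count_fits(n)` straight after reading `n`, and to stop with an error message if it returns 0 rather than going on to read the numbers.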

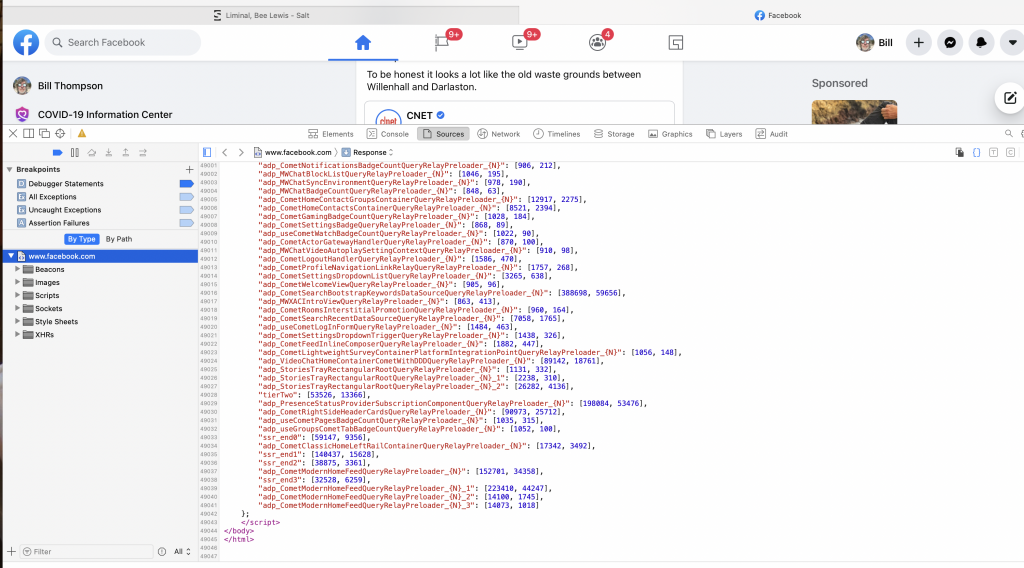

However, the thing we keep calling Facebook’s algorithm is not just a big version of this. Facebook is a complex system of interlocking parts, many of which are based on well-trained ML models, that together generate a constantly shifting bucket of interpretable HTML and JavaScript, amounting to 48,386 lines the last time I loaded the home page.

This is not something you can ‘show’ to a group of well-intentioned members of your oversight board, even if you wanted to. This is a system that is more complex than a single-celled organism, with more interconnections than a flatworm nervous system and behaviours that are, in many ways, simply unpredictable.

But that doesn’t mean it can’t be examined and held to account. Because what really matters with Facebook is not what the code does, but what it is meant to do. And that intention comes from the designers, the developers, the teams, the managers, the product owners and, of course, the senior management, driven by the desires of the ultimate beneficial owners.

Alan and his colleagues don’t need to do a code review; they need to be able to question all levels of Facebook and ask the simplest question: ‘what are you trying to do here?’

The answers, if honest, might be illuminating. They might also provide a more solid basis for regulation, because I don’t want rules that try to constrain companies at the code level, but principles that will govern the outcomes of their operation and the impact on the world.

The BBC, an organisation with which I am very familiar, has a mission, a set of public purposes, and values that, together, frame all aspects of its activity. We see this most clearly in the editorial guidelines, which are an attempt to translate those values into operational principles and are widely regarded as a model for other organisations.

https://www.bbc.com/aboutthebbc/governance/mission

https://www.bbc.co.uk/editorialguidelines/

While less explicit, the BBC’s engineering practice is bound by the same considerations. And while I’m not suggesting that we should write a Royal Charter for Facebook or other online platforms, we can state clearly what we consider to be acceptable objectives for them to aim for in order to be able to operate in a territory, and hold them to account for those objectives.

At the moment we largely do this in terms of avoiding negative consequences, as with broadcast regulation and the online harms regulation. It would be interesting to try to formulate provisions to capture social good, in the way that the BBC’s public purposes do. Imagine asking that social networks ‘should bring people together for shared experiences and help contribute to the social cohesion and wellbeing of the United Kingdom’?

If we tried to regulate at that level, we wouldn’t need to look at the code base unless things were going very badly wrong indeed, because it would be an implementation-level concern.

I think that would be a better outcome.