“We must build AI for people; not to be a person,” Mustafa Suleyman’s recent essay on the importance of avoiding what he terms ‘seemingly conscious AI’, is a welcome intervention, and much better argued than his recent book, The Coming Wave.

It could be argued that it’s also a good way to put some clear blue water between OpenAI, Anthropic, Google and Meta, all of which are hell-bent on giving us new token-based pals who are fun to be with, and his current employer Microsoft and the trusty but personality-free Copilot sidekick, helpful but in no way a likely boyfriend, but that doesn’t invalidate the argument.

What Suleyman is arguing is that current chatbots are designed to exploit vulnerabilities in human psychology in order to lock themselves into people’s lives, and that doing this is a bad idea and should stop. Just as certain visual illusions, like those of MC Escher or the Land Effect, are impossible not to see because they are hard-coded into our perceptual apparatus, certain emotional illusions around interactions with non-sentient actors are impossible not to feel.

We failed to address these issues when we allowed social media and social platforms to use the same intermittent reinforcement systems that make gambling so problematic, and Suleyman argues that we have a space within which we could do something about the design of interactive LLM-based services to ensure that they don’t exploit a parallel set of unpatched vulnerabilities.

This isn’t about the exaggerated dangers of the entirely fictional concept of “AI psychosis”, which has no basis in clinical observations and is basically a tabloid wet dream and a great marketing campaign for the snakeoil merchants who sold people the idea of a ‘cure’ for ‘gaming addiction’ and ‘internet addiction’.

Some people are suffering, without a doubt, and we should do something about this. But there are other dangers that will affect all of us: first, the risk that SCAI locks people into dependency on one provider or one model at a time when there’s nothing really to distinguish between them (as Benedict Evans and Toni Conway-Brown point out cogently in their recent podcast) and becomes a way to cement monopolies; second, that it diverts us into useless conversations about whether these systems need rights, or require us to bring moral considerations to bear when we consider how we use them or, even worse, starts a move to give random token generators some legal protection (never underestimate the desire of politicians to jump on bandwagons).

Let me be clear: this doesn’t mean that the work of the philosophers isn’t important, or that research institutes like Eleos are unnecessary. I love these sorts of conversations and debates and think they are vital. The point where we do get human-level embodied intelligence is going to be cataclysmic in all sorts of ways, and deciding how we treat these minds matters. Thinking about it in advance makes sense, and I’ve written about this before.

But we are nowhere near that today, and I’m a strong sceptic about the potential for LLMs to provide the mechanism for achieving it, so it doesn’t feel like the most pressing issue around the negative impact of AI when we see so much evidence of real current harm due to bias, and Mechahitler is seen as a bit of a joke and not a dire warning about the dangers of the tools we are already deploying.

So I’m with him. Using LLMs to build systems that simulate sentience is therefore a very bad idea, for all the reasons he outlines and more. And he is right to say that this bad thing will happen by design, not accident:

“It is important to point out that Seemingly Conscious AI will not emerge from these models, as some have suggested. It will arise only because some may engineer it, by creating and combining the aforementioned list of capabilities, largely using existing techniques, and packaging them in such a fluid way that collectively they give the impression of an SCAI.”

Time, perhaps, for intervention, although we know it won’t come from the Trump administration, where the idea of being a pretend human being without any real emotion, empathy, or understanding of how people live seems to be a qualification for appointment.

It could be something we pick up here in Europe – the EU, that is, not the isolated little rock I call home – as a quick win for regulation based on European values. And I can see it being something that the Chinese authorities would move towards, since dependence on chatbots reduces dependence on the Party. Let’s see how things go.

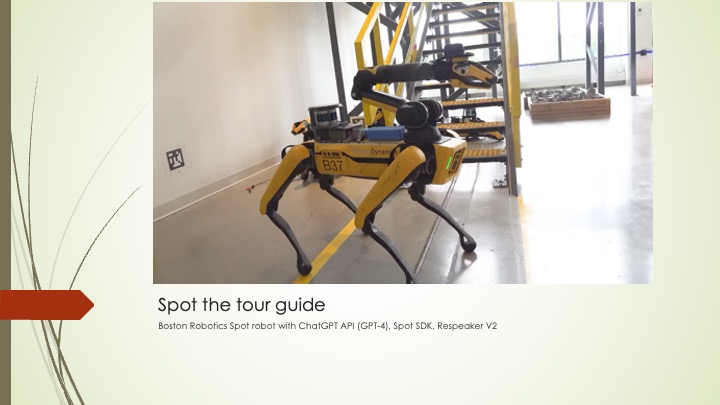

Finally, there’s one troubling aspect of this that Suleyman doesn’t address in his essay – though he may be coming to it, as he’s promised us a series – and that is the rise of the robots. Whatever psychological mechanisms are being exploited by ChatGPT and Claude chatbots to get into our heads are nothing to the impact that a seemingly empathetic, expressive and well-articulated robot with manga eyes and a caring tone of voice will have.

We are so tuned to attribute intention, emotion and reciprocity to creatures that resemble us that once we properly embed LLM-level engagement in a humanoid shell, we are just going to fall into the pit. Maybe we need an actually enforceable rule of robotics, unlike the clumsy plot device created by Asimov (and broken in almost every story he wrote): no robot shall fake it. Not a friendship, not an orgasm, and definitely not a mind.